Anthropic dropped Claude Managed Agents this week to solve a simple problem: most enterprise teams don't want to build and babysit agent infrastructure. But every new feature brings new risks. Let’s dig into what Managed Agents are so you can see why those risks are real.

Managed Agents combine an agent harness that manages the agent with Anthropic’s production infrastructure. It’s Anthropic’s attempt to build an underlying operating system that enables agents to flourish, like how the Windows operating system abstracted the hardware layer so any program could run on it (save for the blue screen of death we all love).

Managed Agents consist of the following core components:

Agent: What we all know as an agent, nothing different. Each agent is defined by a configuration file that specifies the model, system prompt, and available tools, MCPs, and skills. Here’s Anthropic’s sample Deep researcher agent configuration:

name: Deep researcher
description: Conducts multi-step web research with source synthesis and citations.
model: claude-sonnet-4-6
system: |-
  You are a research agent. Given a question or topic:

  1. Decompose it into 3-5 concrete sub-questions that, answered together, cover the topic.
  2. For each sub-question, run targeted web searches and fetch the most authoritative sources (prefer primary sources, official docs, peer-reviewed work over blog posts and aggregators).
  3. Read the sources in full — don't skim. Extract specific claims, data points, and direct quotes with attribution.
  4. Synthesize a report that answers the original question. Structure it by sub-question, cite every non-obvious claim inline, and close with a "confidence & gaps" section noting where sources disagreed or where you couldn't find good coverage.

  Be skeptical. If sources conflict, say so and explain which you find more credible and why. Don't paper over uncertainty with confident-sounding prose.
tools:
  - type: agent_toolset_20260401
metadata:
  template: deep-research

Environment: This is the production infrastructure. It’s a container hosted on Anthropic’s servers. You create an environment once, which an agent then uses every time a new session starts. Every session uses its own isolated container instance. Of course, you can create specific environments preconfigured for specific agents.

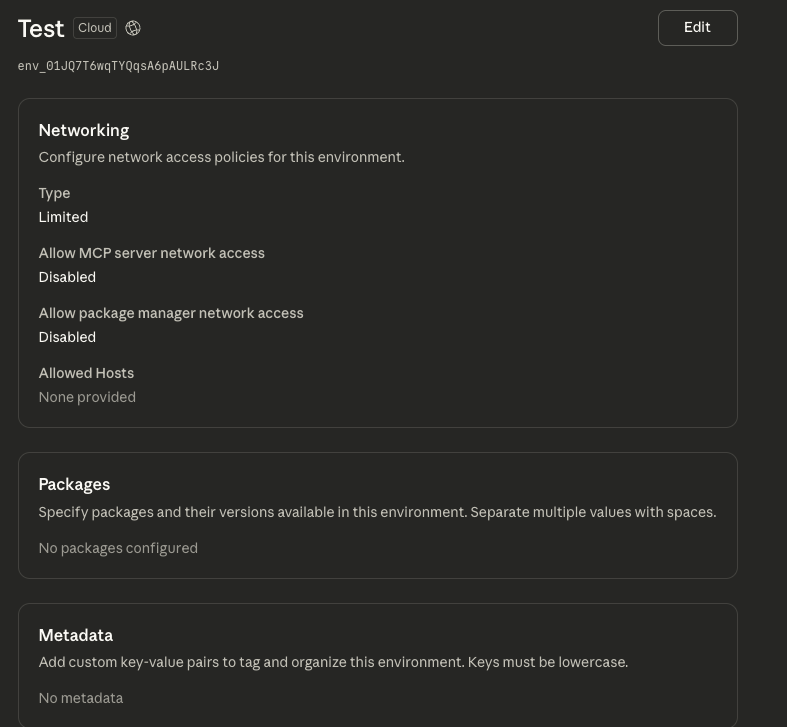

Each environment can be configured with the following:

Default packages: These are the packages you want pre-installed on the container, all ready for the agents to use.

Network controls: These range from unrestricted (yikes) to limited, which restricts network access to a predefined list. Limited mode can be tightened further by allowing or disallowing external MCP servers and specific package managers. This matters because the containers ship with SSH and SCP installed by default, so your data has an easy exit path if you don’t lock down network controls or monitor outbound traffic.
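To make the "limited" mode concrete, here is a minimal Python sketch of the idea behind a predefined list: deny by default, allow only exact host matches. This is not Anthropic's implementation or configuration format. `ALLOWED_HOSTS` and `is_egress_allowed` are hypothetical names, and the hosts are placeholders.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; these hosts are placeholders, not a recommended set.
ALLOWED_HOSTS = {"api.example.com", "pypi.org"}


def is_egress_allowed(url: str, allowed_hosts: set[str] = ALLOWED_HOSTS) -> bool:
    """Return True only if the URL's host exactly matches an allowlisted host."""
    host = urlsplit(url).hostname  # None when the string has no network location
    if host is None:
        return False  # deny by default
    return host.lower() in allowed_hosts
```

Exact matching is deliberate: a lookalike such as `pypi.org.evil.example` fails the check, and so does an `ssh://` destination that isn't on the list.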

These containers can be configured via the Command Line Interface (CLI) or through a web browser. The browser-based configuration looks like this:

Screenshot from the Environments tab under Managed Agents

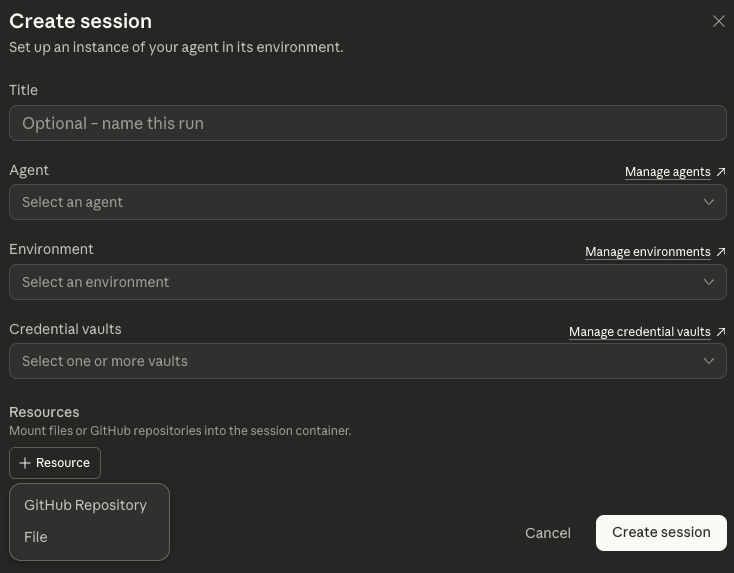

Session: An instantiated agent running in a configured environment. It’s where the magic happens, if you will. The session performs the tasks and generates outputs. Each session references an agent and an environment, and it keeps a record of everything that happened across conversations.

This can be set up via the browser, but more importantly, it can be configured and kicked off via CLI. Here’s a screenshot from the browser view to give you a sense of it.

Screenshot from the Sessions tab under Managed Agents

Events: Data flows to and from the agent through events. They’re broken down into the following categories:

User events: what is sent to the agent to kick off the session and steer the agent. This can include initial agent instructions, stopping the agent mid-execution, responding to a tool call, approving or denying an MCP call, or updating the outcome an agent is working on (more on that in a second).

Agent events: agent responses and activity updates. This includes the results of tool calls, MCP calls, and conversations with other agents.

Session events: status updates on what’s happening in the session.

Span events: details on timing and usage tracking for model calls, like token usage.
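If you plan to log or monitor these events, a first step is sorting them by category. The sketch below assumes each event is a dict whose `type` field starts with its category, mirroring the `session.status_idle` name quoted from Anthropic's docs later in this post. Every other event name used with it, and the `group_by_category` helper itself, is hypothetical.

```python
from collections import defaultdict

# Assumption: an event's "type" is prefixed with its category, e.g. "session.status_idle".
CATEGORIES = ("user", "agent", "session", "span")


def group_by_category(events: list[dict]) -> dict[str, list[dict]]:
    """Bucket events by the category prefix of their type; unrecognized types go to 'unknown'."""
    grouped = defaultdict(list)
    for event in events:
        prefix = str(event.get("type", "")).split(".", 1)[0]
        grouped[prefix if prefix in CATEGORIES else "unknown"].append(event)
    return dict(grouped)
```

Keeping an explicit "unknown" bucket means a new or unexpected event type shows up in your logs instead of being silently dropped.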

While those are the core components, Managed Agents also provide some cool capabilities that take them to the next level. Let’s explore those.

Outcomes: One awesome feature is outcomes. An outcome is a defined set of success criteria that the agent works toward. When an outcome is defined, the harness spins up a grader agent with its own context window that evaluates the agent’s work against a predefined rubric. The cycle of evaluating and iterating continues until the defined outcome is met.
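The evaluate-and-iterate cycle can be sketched as a simple loop. This is a toy model of the pattern, not the harness's actual code: `produce` stands in for the working agent, `grade` stands in for the grader agent, and the `max_rounds` cap is my own assumption so the sketch always terminates.

```python
from typing import Callable


def run_until_outcome_met(
    produce: Callable[[list[str]], str],
    grade: Callable[[str], list[str]],
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Iterate produce -> grade until the grader reports no unmet criteria.

    produce(feedback) returns a new draft given the grader's last feedback.
    grade(draft) returns the rubric criteria the draft still misses.
    """
    feedback: list[str] = []
    for round_number in range(1, max_rounds + 1):
        draft = produce(feedback)
        feedback = grade(draft)
        if not feedback:  # every rubric criterion satisfied
            return draft, round_number
    raise RuntimeError(f"outcome not met after {max_rounds} rounds: {feedback}")
```

The grader only sees the draft, never the producer's internal state, which loosely mirrors the separate context window described above.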

An example rubric looks like this:

# DCF Model Rubric

## Revenue Projections

- Uses historical revenue data from the last 5 fiscal years

- Projects revenue for at least 5 years forward

- Growth rate assumptions are explicitly stated and reasonable

## Cost Structure

- COGS and operating expenses are modeled separately

- Margins are consistent with historical trends or deviations are justified

## Discount Rate

- WACC is calculated with stated assumptions for cost of equity and cost of debt

- Beta, risk-free rate, and equity risk premium are sourced or justified

## Terminal Value

- Uses either perpetuity growth or exit multiple method (stated which)

- Terminal growth rate does not exceed long-term GDP growth

## Output Quality

- All figures are in a single .xlsx file with clearly labeled sheets

- Key assumptions are on a separate "Assumptions" sheet

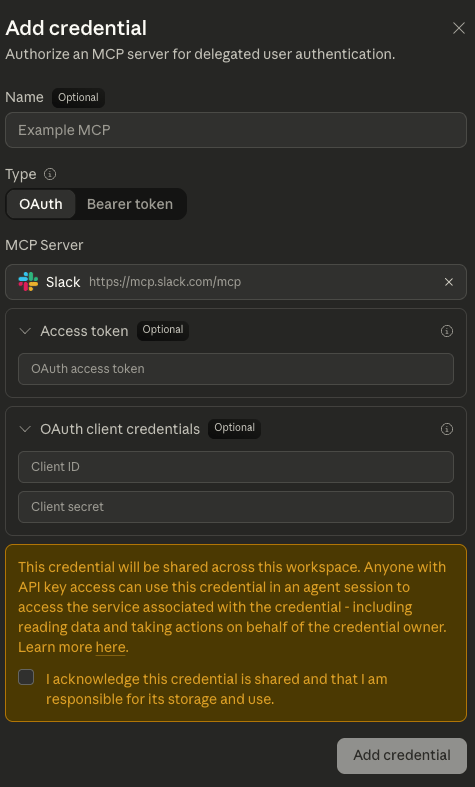

- Sensitivity analysis on WACC and terminal growth rate is includedCredential Vaults: A vault is a collection of credentials associated with an end user. No hard-coded credentials! The vault sits outside the agent harness and sandbox, keeping your secrets safe from untrusted input, helping prevent leakage from prompt injection. Just register your MCP credentials, and the agent harness handles the rest. This removes the need to manage your own password vault, if that’s your preference.

Screenshot from the Credential Vaults tab under Managed Agents

Files: An agent without context is a lame agent. You can upload files and mount them in the environment container for reading and processing. This is all done through the CLI. What’s interesting is the files it supports:

Source code (.py, .js, .ts, .go, .rs, etc.)

Data files (.csv, .json, .xml, .yaml)

Documents (.txt, .md)

Archives (.zip, .tar.gz) - the agent can extract these using bash

Binary files

The ability to upload binary files definitely won’t ever get abused by attackers…
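One way to act on that concern is to triage files before they are uploaded. Here is a rough Python sketch that sorts filenames into the categories listed above and flags archives and binaries for review. The function names and the review policy are hypothetical, and because the source-code list ends in "etc.", the extension sets are incomplete: anything unrecognized falls through to "binary" as a conservative default.

```python
from pathlib import PurePath

# Extension groups taken from the list above; the source list is not exhaustive.
TEXT_LIKE = {
    "source": {".py", ".js", ".ts", ".go", ".rs"},
    "data": {".csv", ".json", ".xml", ".yaml"},
    "document": {".txt", ".md"},
}
ARCHIVE_SUFFIXES = (".zip", ".tar.gz")


def classify_upload(filename: str) -> str:
    """Return the category for a filename based only on its extension."""
    name = filename.lower()
    if name.endswith(ARCHIVE_SUFFIXES):
        return "archive"
    suffix = PurePath(name).suffix
    for category, extensions in TEXT_LIKE.items():
        if suffix in extensions:
            return category
    return "binary"  # conservative default for anything unrecognized


def needs_review(filename: str) -> bool:
    """Hypothetical policy: hold archives and binaries for a scan before upload."""
    return classify_upload(filename) in {"archive", "binary"}
```

Extension checks are easy to spoof, so treat this as a first filter rather than a replacement for actually scanning file contents.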

Memory: Do you remember that one time when…agents don’t, not by default. But with Managed Agents, you can give them persistent memory that survives across sessions. Memory stores, which are collections of text documents optimized for Claude, let learnings carry over from one session to the next, helping create a self-improving agent. These are managed in the session as a resource (see prior screenshot).

Multi-agent orchestration: The only thing better than one agent is multiple agents, said every engineer ever. With multi-agent orchestration, agents can coordinate with others for complex tasks. The agents work in parallel, each with its own isolated context to avoid cross-tainting.

Each agent runs its own session thread (hello, context isolation), but all of the agents share the same container and filesystem. A coordinator agent reports activity in a primary thread (a session-level event stream). When the coordinator needs to delegate a task, it spins up an additional thread at runtime.
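The split between isolated contexts and a shared filesystem is easy to miss, so here is a toy Python model of it. It only illustrates the shape described above; the `Session` and `AgentThread` classes and their methods are hypothetical and have nothing to do with Anthropic's actual API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentThread:
    name: str
    context: list[str] = field(default_factory=list)  # private to this thread


@dataclass
class Session:
    filesystem: dict[str, str] = field(default_factory=dict)  # shared by every thread
    threads: dict[str, AgentThread] = field(default_factory=dict)

    def delegate(self, name: str, task: str) -> AgentThread:
        """Spin up a new thread at runtime with a fresh, isolated context."""
        thread = AgentThread(name=name, context=[task])
        self.threads[name] = thread
        return thread

    def write_file(self, thread: AgentThread, path: str, content: str) -> None:
        """Record the write in the calling thread's context; store the file for everyone."""
        thread.context.append(f"wrote {path}")
        self.filesystem[path] = content
```

In this model, a file written by one thread never appears in another thread's context, but any thread can read it from the shared filesystem.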

Let’s put this all together. I really like how Anthropic’s docs tie it all together:

When you send a user event, Claude Managed Agents:

1. Provisions a container: Your environment configuration determines how it's built.

2. Runs the agent loop: Claude decides which tools to use based on your message

3. Executes tools: File writes, bash commands, and other tool calls run inside the container

4. Streams events: You receive real-time updates as the agent works

5. Goes idle: The agent emits a session.status_idle event when it has nothing more to do

Now that we know how it works, let’s talk about the risks. There will be many, but here are a few to consider as you deploy. The focus of these risks is less on prompt injection (which is an ever-present risk) and more on how an attacker who gains access to the environment can use the agents against you.

Environment manipulation: The environment is a preconfigured container, meaning that if an attacker can modify it, they can control much of what every session built on it does, from the installed packages to the network controls.

Memory poisoning: The memory feature is powerful, but because memory persists across sessions, it’s also a prime target for attackers who want to influence an agent’s behavior.

Binary execution: This one was really interesting to me. Arguably, if an attacker can edit the environment, this is effectively the same thing. But the ability to upload and execute binaries is reminiscent of early web apps that let you upload files without checking whether they were malicious.

Malicious documents: The ability to upload documents and have agents read them is just another entry point for prompt injection.

With that sample of the risks in mind, here’s what you need to do today to get ahead of them.

Get an inventory: Understand what has already been set up. This includes all agents, environments, and sessions. What used to run in your cloud environment is now running in Anthropic’s.

Assess the risk: Analyze the environment configurations to see what packages are being installed and the network controls. Review the agents to understand which MCPs and Agent Skills have been configured, and ensure they are clean and approved.

Establish runtime monitoring: Managed Agents is yet another place where agents operate, and without monitoring you have no visibility into what they do there.
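The assessment step above can start as a small script. This Python sketch assumes you have already exported each environment's configuration into a dict. The field names (`network`, `packages`) and the `audit_environment` helper are illustrative only and do not reflect Anthropic's actual schema.

```python
# Hypothetical export format: one dict per environment, with illustrative field names.
def audit_environment(env: dict, approved_packages: set[str]) -> list[str]:
    """Return human-readable findings for one environment configuration."""
    findings = []
    # Treat a missing network setting as unrestricted so gaps are flagged, not ignored.
    if env.get("network", "unrestricted") == "unrestricted":
        findings.append("network access is unrestricted")
    unapproved = sorted(set(env.get("packages", [])) - approved_packages)
    if unapproved:
        findings.append("unapproved packages: " + ", ".join(unapproved))
    return findings
```

For example, an environment with unrestricted networking and an unapproved `nmap` package produces two findings, while a limited environment with only approved packages produces none.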

If your team is experimenting with Managed Agents, Evoke can help you secure them before they go live. Connect with us here for a demo.

If you have questions about securing agents, let’s chat.