In nearly 15 years of business travel, I had my first mid-flight diversion. But not for any cool reason (if there ever is one). It was just weather shutting down the Dallas Fort Worth airport. This brings my disdain for the DFW airport to a level that can only be described with a word as pompous as rancorous.

On the bright side, I watched a nice sunset from the plane as we landed in Oklahoma City and learned an adorable dog was on board. So, I guess it balances out.

Anyway, today in cyber and AI, we’re covering:

ChatGPT can be depressing

How to hack a ransomware gang

A rare glimpse into how China is using LLMs to support censorship

-Jason

P.S. No big deal, just questioning the meaning of time and whether there could be no “now.”

AI Spotlight

ChatGPT can be depressing

OpenAI and MIT’s Media Lab did a joint research project to understand the impact that AI models like ChatGPT have on humans. And the results are…depressing.

The research included two studies. The first analyzed almost 40 million ChatGPT interactions and paired that data with targeted user surveys to understand how users felt about ChatGPT.

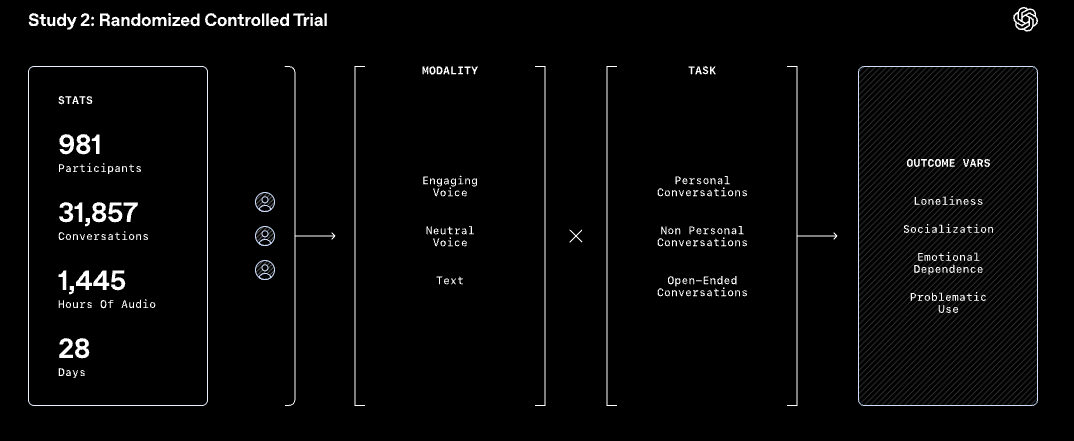

The second study tested various modalities, including an engaging voice, a neutral voice, and just text, to understand the impact on dependence and emotional attachment.

Most people use ChatGPT for what it is. A tool. No dependency forms, no sad feelings. They’re just using ChatGPT to help with specific tasks. That’s a positive.

But then, there are the power users. And this is where it starts to get sad. These users were building a relationship with ChatGPT.

OpenAI found that “heavy users are more likely to have affective cues in their interaction with ChatGPT” and that “Users who describe ChatGPT in personal or intimate terms (like identifying it as a friend) also tend to have the model use pet names and relationship references more frequently.”

Interestingly, the study found that more affective cues occurred in text interactions than in voice mode. Perhaps that’s because it would creep people out to hear themselves swooning out loud about their AI friend.

While all of that is interesting, there’s a sadder part to this. The heaviest users showed a growing dependence on ChatGPT the more they used it. And the more they used it, the lonelier they felt. The lonelier they felt, the less they socialized in the real world. You can see a spiral starting here.

If you know a heavy ChatGPT user…check in on them.

Hug them.

Then, delete their ChatGPT account and take them outside.

Security Deep Dive

How to hack a ransomware leak site

Security researchers at Resecurity went for extra credit and hacked into the BlackLock ransomware group’s data leak site. I’m not here to argue the law or ethics, but sometimes security researchers do certain things to get certain access against certain people who aren’t going to call the authorities.

How they got in is pretty embarrassing (for the gang). It started with a path traversal vulnerability, which lets attackers (or security researchers) read files on the server that they shouldn’t be able to.

In this case, it was as easy as adding a lot of “../../” to the URL and entering the file name they wanted to read.
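To see why a pile of “../” works, here’s a minimal Python sketch of a hypothetical file-serving handler. The web root path and both function names are made up for illustration; this is not BlackLock’s code. The naive version glues the requested path onto the web root, and normalizing the result lets each “..” climb one directory until it reaches “/”. The safer version resolves the path the same way and then refuses anything that lands outside the web root.

```python
import posixpath

# Hypothetical web root for a leak site's file-serving endpoint (illustration only).
WEB_ROOT = "/var/www/leaksite"


def naive_resolve(requested: str) -> str:
    """Vulnerable: join the user-supplied path onto the web root and normalize it.

    normpath collapses each ".." by removing one parent directory, so enough of
    them walk all the way up to "/" and out of the web root.
    """
    return posixpath.normpath(posixpath.join(WEB_ROOT, requested.lstrip("/")))


def safe_resolve(requested: str) -> str:
    """Safer: resolve the same way, then reject anything outside the web root."""
    candidate = naive_resolve(requested)
    if posixpath.commonpath([candidate, WEB_ROOT]) != WEB_ROOT:
        raise PermissionError(f"path escapes web root: {requested!r}")
    return candidate


if __name__ == "__main__":
    attack = "../../../../../../etc/hosts"
    print(naive_resolve(attack))  # /etc/hosts
    print(safe_resolve("posts/victim.html"))  # /var/www/leaksite/posts/victim.html
    try:
        safe_resolve(attack)
    except PermissionError as err:
        print("blocked:", err)
```

A real server would also need to check the path after URL-decoding (so “%2e%2e%2f” can’t sneak past) and resolve symlinks, for example with `os.path.realpath`, rather than relying on string normalization alone.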

Most leak sites are hosted as Tor onion sites. The benefit is that Tor hides the server’s actual location and network information. But that benefit only holds if the server doesn’t have a basic vulnerability like this one. If you’re unsure what a Tor onion site is, check out a previous newsletter for more on how onion sites work.

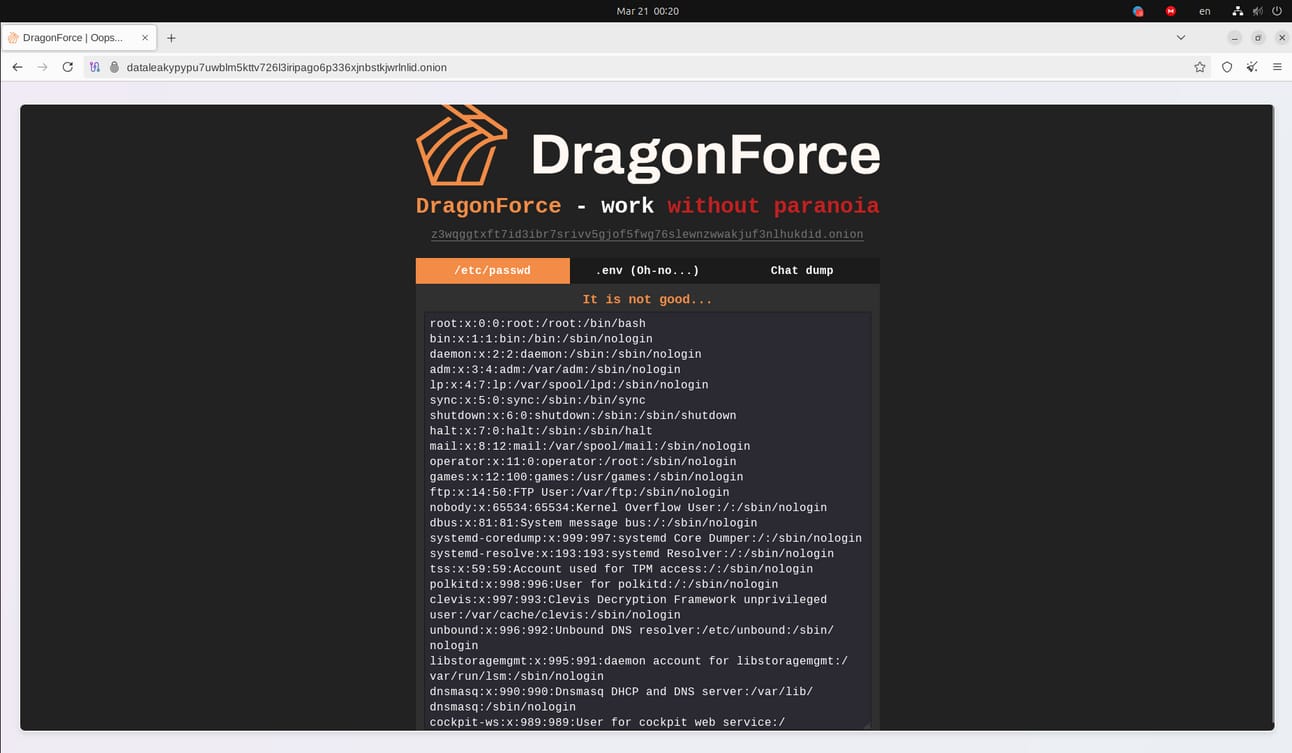

In the screenshot above, that file was “/etc/hosts”, which maps hostnames to IP addresses. Reading it let the security researchers find the server’s real IP address.
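The hosts file is plain text: each line holds an IP address followed by one or more hostnames, and “#” starts a comment. Here’s a minimal Python sketch of pulling the mappings out of it. The sample contents are hypothetical and use a reserved documentation address, not the real server’s IP.

```python
def parse_hosts(text: str) -> dict[str, str]:
    """Map each hostname in /etc/hosts-style text to its IP address."""
    mapping: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue  # skip blank and comment-only lines
        ip, *names = line.split()
        for name in names:
            mapping.setdefault(name, ip)  # keep the first entry seen for each name
    return mapping


# Hypothetical file contents; 203.0.113.0/24 is reserved for documentation.
SAMPLE_HOSTS = """\
127.0.0.1     localhost
# A line like this is what can give away a server's address:
203.0.113.10  leak-server leak-server.local
"""

if __name__ == "__main__":
    hosts = parse_hosts(SAMPLE_HOSTS)
    print(hosts["leak-server"])  # 203.0.113.10
```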

That same path traversal vulnerability let the security researchers pull other sensitive files, including the list of user accounts in “/etc/passwd”, password hashes in “/etc/shadow” (which the researchers cracked), and, best of all, each user’s command history stored in “.bash_history” files.
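Both files are colon-separated text. A line in “/etc/passwd” has seven fields (name, password placeholder, UID, GID, comment, home directory, login shell), while the matching line in “/etc/shadow” holds the password hash, whose “$id$” prefix names the hashing scheme. Here’s a minimal Python sketch of the kind of triage an analyst might do with the two files. The sample lines and hash values are made-up placeholders, and the scheme table covers only a few common prefixes. The scheme matters because cracking means guessing passwords offline and hashing each guess the same way until one matches.

```python
# A few common crypt(3) scheme prefixes on Linux (not an exhaustive list).
SCHEMES = {"1": "MD5-crypt", "5": "SHA-256-crypt", "6": "SHA-512-crypt", "y": "yescrypt"}


def login_users(passwd_text: str) -> list[str]:
    """Return accounts from /etc/passwd-style text whose shell permits logins."""
    users = []
    for line in passwd_text.splitlines():
        fields = line.split(":")
        if len(fields) != 7:
            continue  # skip blank or malformed lines
        name, shell = fields[0], fields[6]
        if not shell.endswith(("nologin", "false")):
            users.append(name)
    return users


def hash_schemes(shadow_text: str) -> dict[str, str]:
    """Map each account in /etc/shadow-style text to the scheme of its stored hash."""
    result = {}
    for line in shadow_text.splitlines():
        fields = line.split(":")
        if len(fields) < 2:
            continue  # skip blank or malformed lines
        name, stored = fields[0], fields[1]
        if stored.startswith("$"):
            scheme_id = stored.split("$")[1]  # "$6$salt$hash" -> "6"
            result[name] = SCHEMES.get(scheme_id, f"unknown (${scheme_id}$)")
        elif stored == "":
            result[name] = "empty (no password required)"
        else:
            result[name] = "locked or no password set"  # e.g. "*" or "!"
    return result


# Hypothetical sample files with placeholder hash values.
SAMPLE_PASSWD = "root:x:0:0:root:/root:/bin/bash\ndaemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin\n"
SAMPLE_SHADOW = "root:$6$examplesalt$examplehash:19000:0:99999:7:::\ndaemon:*:19000:0:99999:7:::\n"

if __name__ == "__main__":
    print(login_users(SAMPLE_PASSWD))   # ['root']
    print(hash_schemes(SAMPLE_SHADOW))  # {'root': 'SHA-512-crypt', 'daemon': 'locked or no password set'}
```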

The “.bash_history” file is always a gold mine because it records the commands a user typed at the shell. When I did forensics, it was one of the first places I looked to understand what a user was doing.

In this case, the history showed the attacker typing a password in plain text. For some reason, they wanted the MD5 hash of the root account’s password and ran:

`echo root209370293683jkynrnh,d | md5sum`

With passwords in hand and the server’s IP address ready, the security researchers should have been able to log into the server. But the attacker did one smart thing: they protected access to the server with a digital certificate, which you would need in order to connect. So the security researchers just downloaded it from the server using the same vulnerability. Not a very effective defense after all.
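That one-liner shows why typing secrets into a shell is a bad idea: the command, password included, lands in “.bash_history” in plain text. There’s also a subtle gotcha: `echo` appends a newline, and `md5sum` hashes that newline too, so the result is not the MD5 of the password alone. Here’s a minimal Python sketch of the difference, using a placeholder string rather than the password from the history file.

```python
import hashlib


def md5_of_echo(text: str) -> str:
    """Digest that `echo TEXT | md5sum` prints: echo adds a trailing newline."""
    return hashlib.md5((text + "\n").encode("utf-8")).hexdigest()


def md5_of_text(text: str) -> str:
    """Digest of the text alone, which is what `echo -n TEXT | md5sum` prints."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    secret = "correct-horse"  # placeholder, not the password from the history file
    print(md5_of_echo(secret))
    print(md5_of_text(secret))  # a different digest, because of the newline
```

`md5sum` also prints “  -” after the digest to mark standard input; these functions return just the hex digest.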

With full access to the ransomware leak site, the security researchers could monitor activities. This included alerting victims before their data was posted to the leak site. Two examples of these are:

Alerted the Canadian Centre for Cyber Security of a Canadian victim 13 days before publication

Alerted CERT-FR about a French victim two days before publication

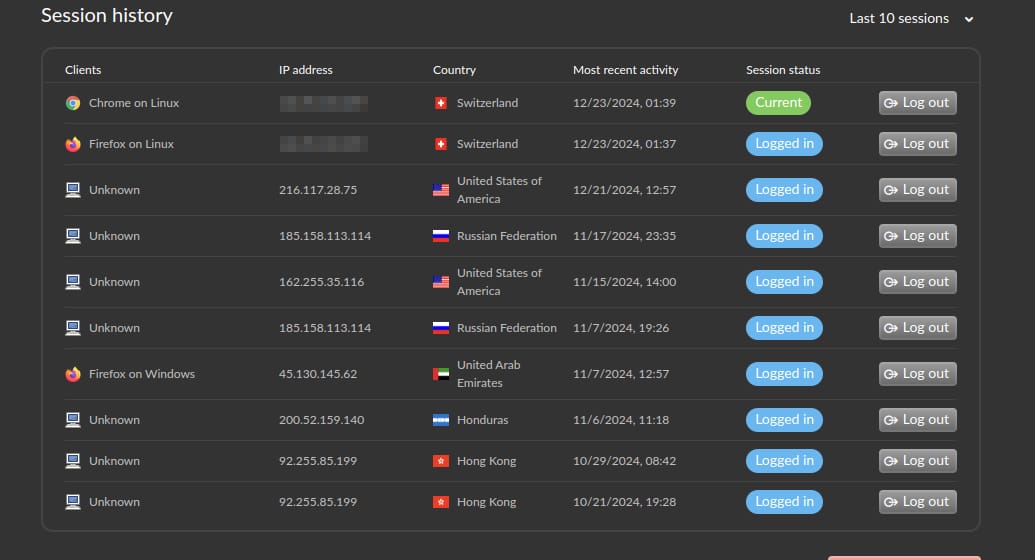

They could also monitor attacker logins to the server. A quick lookup of those IP addresses shows they map back to sketchy hosting providers and what could be compromised businesses.

Of course, when one security researcher finds a vulnerability, others aren’t far behind. And that includes rival ransomware gangs.

The DragonForce ransomware gang defaced the BlackLock leak site. What a shame.

Security & AI News

What Else is Happening?

😕 China has been known to build ghost cities, and now that trend is extending to AI data centers. As AI boomed, China built many data centers to support computing needs. Now, up to 80% of that computing power remains unused.

📚 A hacker published information on over 3 million NYU students dating back to 1989. Of all the places to post it, the hacker chose NYU’s own website, which displayed the students’ information for nearly two hours before the university regained control of the site.

🌵 Phishing simulations are fun for adults and kids. France just completed a phishing simulation that went to 2.5 million middle and high school students in a weirdly named campaign called Operation Cactus. Just over 8% of the students clicked the link, which promised free pirated video games.

🇨🇳 This is a rare glimpse into how China uses LLMs to support its censorship machine. While the outcomes are not ideal, LLMs are a perfect tool for automating China’s objective of monitoring and controlling information posted online.

If you enjoyed this, forward it to a fellow cyber nerd.

If you’re that fellow cyber nerd, subscribe here.

See you next week, nerd!